Model-Market Fit: The New Make-or-Break for AI Startups

A new framework for AI founders and investors, and the real-world examples that prove it out.

The Framework Every AI Founder Is Missing

The market is full of AI startups with great products and real funding. Most deliver instant value. But the growth never locks in.

Deals slow down, expansion stalls, CAC keeps climbing. Is it product-market fit? Founder-market fit? Both can be true, and the system still won’t click.

There is a layer of fit beneath the surface that hasn’t been named yet. Until that missing floor is found, plenty of AI companies will look like they are winning right up until they stop.

The gap between a great model and a real market is often wider than it looks…


Table of Contents

1. The Fit Stack: Three Frameworks, One Missing Floor

2. The Capability Threshold: Why Timing Is Everything in AI

3. Three Companies That Got It Right

4. The Business Model Layer: Technical MMF Isn’t Enough

5. What to Do With This as a Founder or Investor

6. The Full Stack

1. The Fit Stack: Three Frameworks, One Missing Floor

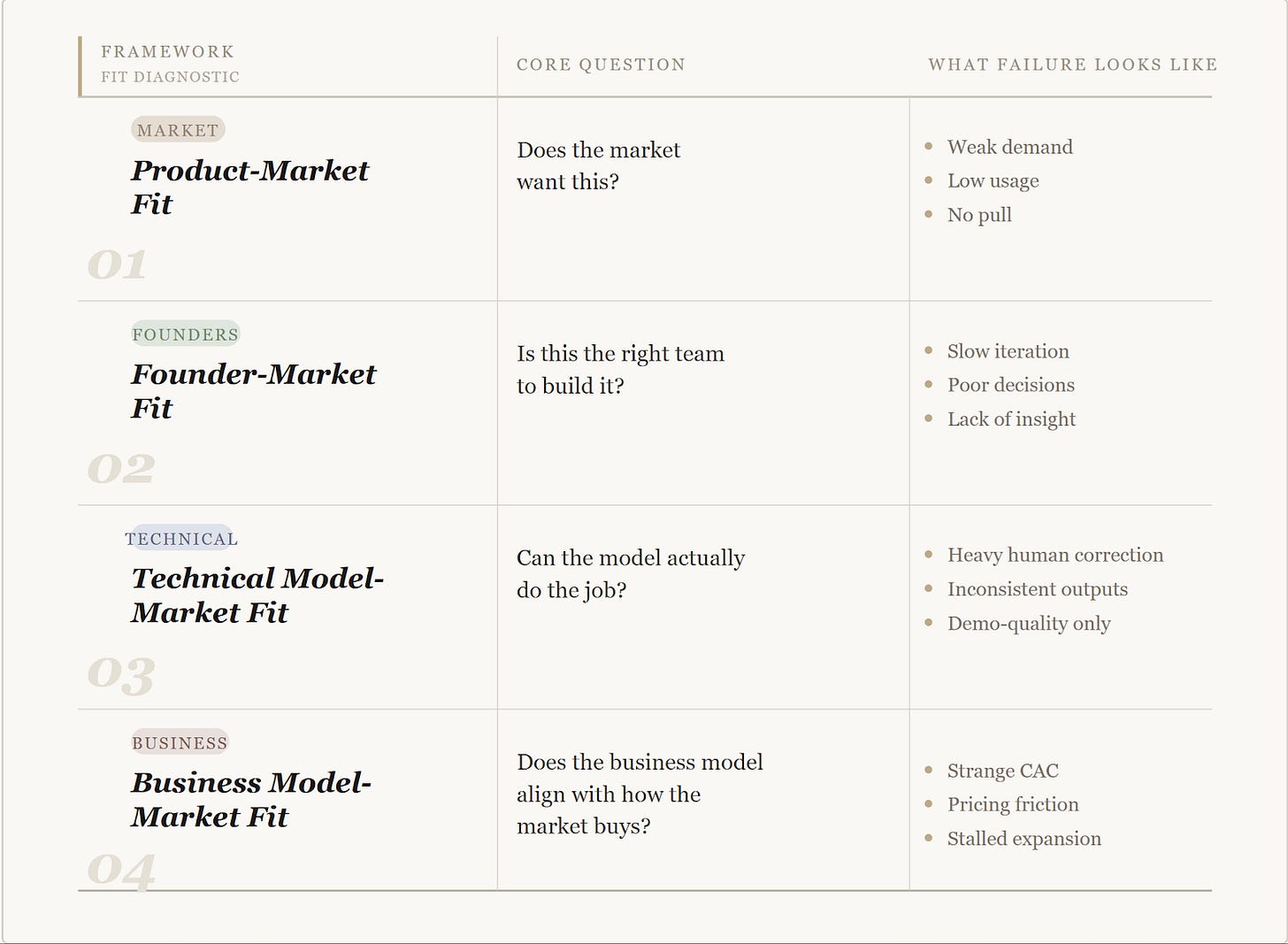

Product-market fit and founder-market fit are both useful frameworks when building a startup. They ask good questions, but they skip a step that is specific to AI.

They assume the product can actually deliver what the market is asking for, and that the way you plan to sell it fits how that market behaves.

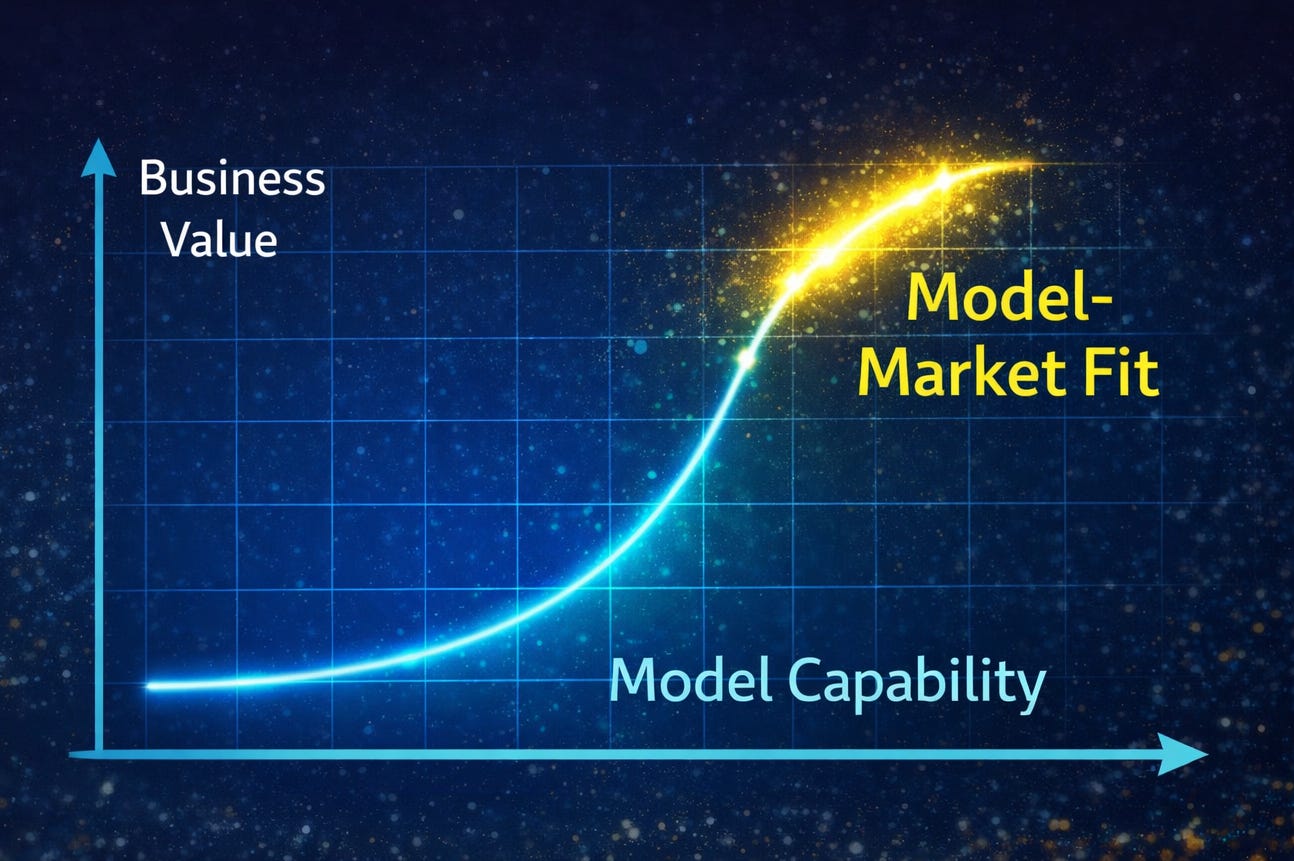

So here’s the new framework: model-market fit. It is the foundation that holds everything else up and it has two parts that need to be separated.

The first is technical. Can the model perform the core task to a standard a customer would accept without constant correction?

The second is commercial. Does the way you price, package, and deliver the product align with how that market buys, budgets, and expands spend?

Technical model fit determines whether the product can work at all.

Business model fit determines whether it can scale inside a real market.

You can have a model that performs well and still fail because the business model doesn’t align with how customers actually buy. You can also have a clean commercial structure and still fail because the system cannot do the job reliably.

Most AI startups that stall are not failing because demand is absent but because one of these underlying layers is missing, even if everything above it looks right.

2. The Capability Threshold: Why Timing Is Everything in AI

A market cannot pull a product that cannot do its job. It sounds simple, but it is exactly where many AI founders get stuck.

You can have the budget and the interest, but if the model fails at the core task, no one will truly rely on it.

What looks like a lack of demand is often just a model that has not crossed the capability threshold yet.

Nicolas Bustamante wrote about this recently, saying that before anything else, the tech has to actually work.

When “good enough” is not enough

AI does not improve in a way that is easy to predict. A model can look impressive in a demo for months without being usable in the real world.

That’s until something clicks. Better reasoning or fewer mistakes turns the tool from an experiment into a necessity. This change is not small. It is the moment the workflow can finally hold up on its own.

The gap between 80% and 99% accuracy is where businesses live or die. In low-risk jobs, a few mistakes are fine. In areas like law, medicine, or finance, being wrong has real consequences.

Until the model hits that higher bar, the market stays out of reach. You might be able to show a great demo, but you cannot actually deploy it.
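One way to see why that gap matters is to look at workflows with several dependent steps. The sketch below assumes, purely for illustration, that each step succeeds independently with the same accuracy. The function name and the ten-step workflow are hypothetical and not taken from any benchmark.

```python
def workflow_success_rate(step_accuracy: float, steps: int) -> float:
    """Chance that every step in a chain succeeds.

    Assumption (not from the article): steps fail independently and
    share the same per-step accuracy.
    """
    if not 0.0 <= step_accuracy <= 1.0:
        raise ValueError("step_accuracy must be between 0 and 1")
    if steps < 1:
        raise ValueError("steps must be at least 1")
    return step_accuracy ** steps


if __name__ == "__main__":
    # Compare the two accuracy levels discussed above on a 10-step workflow.
    for accuracy in (0.80, 0.99):
        rate = workflow_success_rate(accuracy, steps=10)
        print(f"{accuracy:.0%} per step -> {rate:.1%} for a 10-step workflow")
```

Under those assumptions, a ten-step workflow finishes cleanly about 11% of the time at 80% per-step accuracy and about 90% of the time at 99%. Real workflows will not follow this model exactly, but it shows how a modest per-step gap can separate a demo from a deployment.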

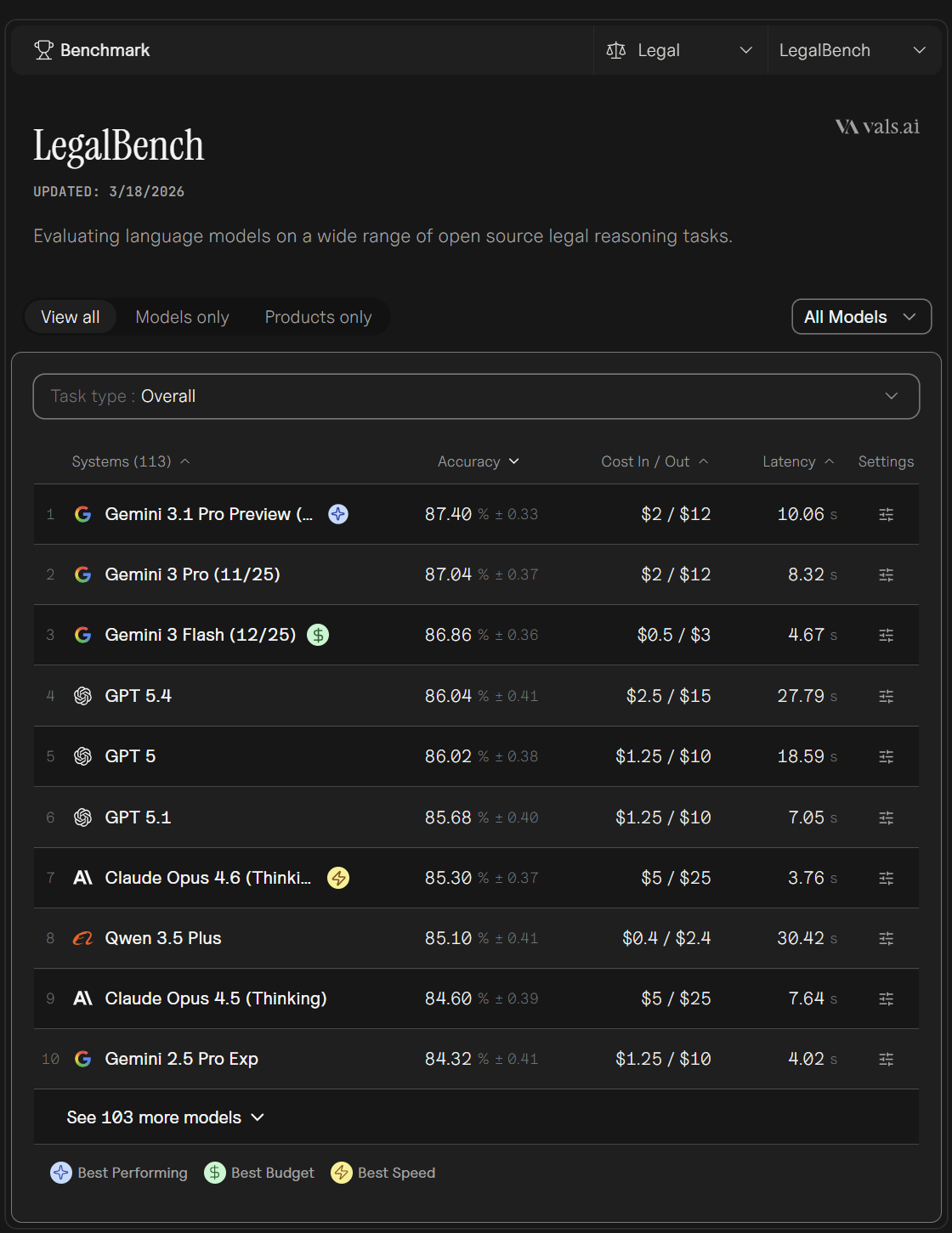

What the benchmarks actually tell you

Benchmarks only matter when interpreted in context. On benchmarks like LegalBench and Vals-style evaluations, performance moving into the high 80s starts to resemble levels that can support real legal workflows.

Though that level does not remove human review, it reduces it enough to make the system economically viable. A lawyer can treat the output as a starting point that holds up under scrutiny.

Contrast that with finance-focused agents operating around 56% on similar tasks. At that level, outputs are too inconsistent to anchor real decisions.

You can demo it but you cannot deploy it. The difference between those numbers shows where a category begins to scale and where it remains stuck.

Human-in-the-loop: bridge or disguise

Many teams use humans to hide a weak model. They call it human-in-the-loop, but it is often just a disguise. There is a simple way to tell the difference. If you took away the human review today, would the customer still pay for the product?

If the answer is no, the model is not ready. You are just running a manual process with a digital interface.

Timing in AI is all about this threshold. The market is usually ready long before the product is. When the model finally becomes capable, adoption simply takes off.

The demand was always there, waiting for the technology to finally catch up.

3. Three Companies That Got It Right

The best way to see the capability threshold is to look at the exact moment a model becomes good enough to do real work. In each case below, that moment shows up as a sudden burst of growth, because the demand was already waiting.

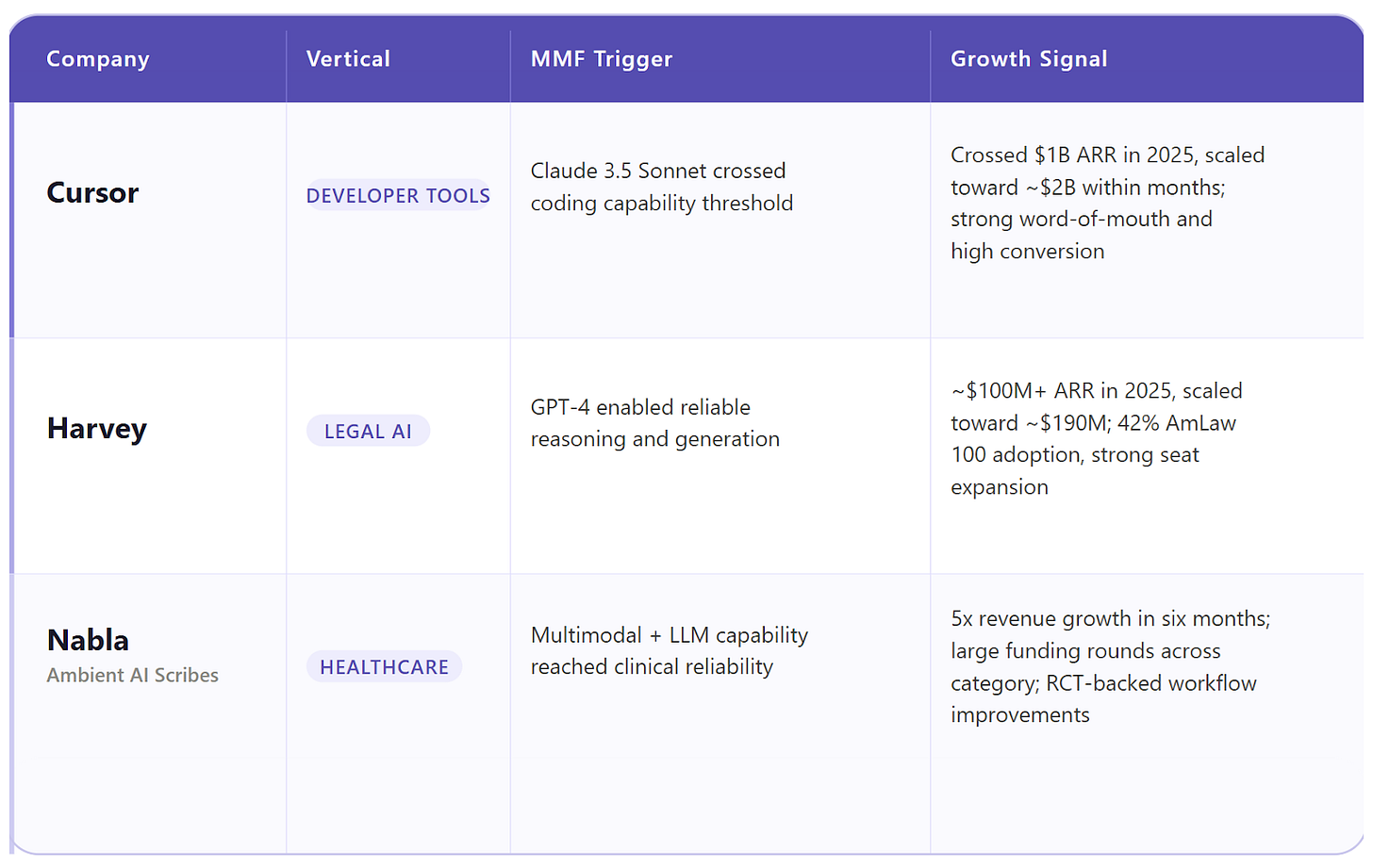

Cursor: when the model caught up

Cursor's growth did not begin with a new strategy. Early versions worked, but they felt limited. Developers could experiment with it, not depend on it. The constraint was simple: the model could not reliably handle real coding workflows.

The inflection point came in June 2024 with Claude 3.5 Sonnet. The product stayed the same; the model didn't. And that changed everything.

Within months, the numbers moved. Cursor crossed $1B in ARR in late 2025 and doubled to roughly $2B within a single quarter, one of the fastest scaling curves seen in SaaS. It became the fastest SaaS company to move from $1M to $500M in revenue, with minimal marketing and strong word of mouth. Its freemium conversion rate hit 36%, far above the typical 2% to 5%.

This is not execution-driven growth. It is what happens when model-market fit is unlocked and the market starts pulling.

Harvey: when trust unlocked spend

Harvey AI sits in a category where accuracy is non-negotiable. Earlier tools could assist with narrow tasks, but they could not support real legal workflows.

GPT-4 changed that in March 2023. It crossed the threshold where reasoning and generation became reliable enough for professional use.

Harvey’s growth followed. After reaching roughly $50M ARR at the end of 2024, the company crossed $100M in 2025 and scaled to around $190M soon after, serving over 500 customers including 42% of the AmLaw 100. Weekly active users grew fourfold within a year.

The more telling signal shows up in expansion. Median seat count doubles within twelve months. Once the output holds up under scrutiny, usage compounds.

Ambient AI scribes: when a category turns real

Ambient AI scribes address a problem that has existed for years. Doctors spend hours on documentation. The demand to remove that burden has always been clear.

The constraint was capability. Capturing conversations, structuring them into clinical notes, and doing it reliably enough for healthcare settings required a level of performance that did not exist. That changed with newer large models and multimodal systems.

The shift showed up quickly in the numbers. Funding in the category has accelerated sharply, with multiple companies raising large rounds as adoption across health systems picks up. Nabla reported 5x revenue growth within six months. A randomized trial in NEJM AI, covering 238 physicians and over 48,000 visits, showed measurable improvements in documentation workflows.

In healthcare, validation usually moves slowly. When clinical evidence appears this early, it signals that the threshold has been crossed.

Across all three cases

In each case, demand was already there. Developers wanted a reliable coding partner. Lawyers wanted automation that reduced review. Doctors wanted relief from documentation.

None of these companies created that demand. The model finally became capable of meeting it.

The rope was always there. Once model-market fit was reached, it pulled tight.

4. The Business Model Layer: Technical MMF Isn’t Enough

Building an AI model that actually works is a massive win, but it doesn’t guarantee a real business.

This is where the second layer of fit often fails, and it usually happens without the founder noticing. A startup can have a great product and happy users but still struggle to actually scale.

When growth stalls, people usually blame the sales team. In reality, the way the company is built is simply fighting the way the market works.

Why the math stops making sense

Every industry has a specific way of handling money. If an AI tool ignores those rules, everything feels difficult.

For example, if a market is used to paying for a finished result but a startup insists on a monthly subscription, customers will hesitate. It makes the pricing feel like a constant debate instead of a simple choice.

There is also the issue of cost. Running high-end AI is not free. In some industries, those costs are easy to pass on. In others, where margins are already razor-thin, adding a massive model cost can make scaling feel like a burden instead of a victory.

If a product saves a user ten minutes but costs the company five dollars every time they click a button, the business is upside down.
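A rough sketch of that arithmetic follows. Only the ten minutes saved and the five-dollar cost come from the example above. The $60 hourly value of the user's time and the 20% share of that value the vendor can charge are illustrative assumptions, and both function names are hypothetical.

```python
def margin_per_action(minutes_saved: float, hourly_value: float,
                      capture_share: float, cost_per_action: float) -> float:
    """Vendor margin on one action, in dollars.

    value created = time saved, priced at the user's hourly value
    revenue       = the share of that value the vendor can charge
    """
    value_created = minutes_saved * hourly_value / 60
    revenue = value_created * capture_share
    return revenue - cost_per_action


def break_even_hourly_value(minutes_saved: float, capture_share: float,
                            cost_per_action: float) -> float:
    """Hourly value of user time at which the margin reaches zero."""
    return cost_per_action * 60 / (minutes_saved * capture_share)


if __name__ == "__main__":
    # Assumed inputs: $60/hour user time, vendor captures 20% of value.
    margin = margin_per_action(10, hourly_value=60, capture_share=0.2,
                               cost_per_action=5)
    print(f"Margin per action: {margin:+.2f} USD")
    needed = break_even_hourly_value(10, capture_share=0.2, cost_per_action=5)
    print(f"Break-even hourly value: {needed:.0f} USD")
```

With these assumed inputs, each action loses three dollars, and the user's time would need to be worth about $150 an hour before the vendor breaks even. The same product can be viable in a high-value profession and upside down in a thin-margin one.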

Stop blaming the sales team

Structural failures do not look like big strategic errors. They show up as small, annoying problems.

Customer acquisition costs start to climb, users churn for no clear reason, and deals take six months to close instead of two.

The usual response is to hire more salespeople or rewrite the pitch decks. But those are just band-aids. You cannot out-hustle a bad business model. If the pieces are aligned, the market actually helps the company grow.

The pricing fits right into existing budgets and expansion happens because it makes sense for the customer. If they aren’t aligned, every single dollar of revenue will feel like a fight.

Technical fit gets a foot in the door, but business model fit is what keeps the lights on.

5. What to Do With This as a Founder or Investor

If this doesn’t change how you make decisions, it’s not useful. The most practical way to use it is through a few hard questions that force clarity.

For founders: what are you actually betting on

Before building, answer three questions honestly.

If your model receives the same inputs as a human expert, can it produce output a customer would pay for without significant correction?

If all human review is removed from the workflow, does the product still function in a way a customer finds useful and reliable?

Do your pricing and distribution align with how this market actually buys and allocates budget?

If any answer is “not yet,” the idea may be right but the timing probably isn’t. Your AI startup strategy needs to reflect what you are waiting for.
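The three questions can be written down as a simple checklist. This is a minimal sketch for self-assessment, not a scoring method from the framework itself; the class, field names, and labels are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class FitCheck:
    # One honest yes/no answer per founder question above.
    output_sellable_without_heavy_correction: bool
    useful_without_human_review: bool
    pricing_matches_how_market_buys: bool


def missing_layers(check: FitCheck) -> list[str]:
    """Return the layers where the honest answer is still 'not yet'."""
    gaps = []
    if not check.output_sellable_without_heavy_correction:
        gaps.append("technical fit: output quality")
    if not check.useful_without_human_review:
        gaps.append("technical fit: reliance on human review")
    if not check.pricing_matches_how_market_buys:
        gaps.append("business model fit")
    return gaps


if __name__ == "__main__":
    # Example: strong output, but the product still leans on human review.
    answers = FitCheck(True, False, True)
    print(missing_layers(answers))
```

An empty list means all three answers are yes. Anything else names the layer your strategy is waiting on.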

Then comes the harder question.

Are you building for current capability or anticipated capability?

There is a narrow window where being early works. The risk sits further out. When capability is still two to three years away, the product can feel close enough to justify investment but not close enough to sustain traction.

Teams in that zone often spend multiple cycles refining something the market cannot yet use.

The way through is not to pause or wait it out. It’s to build assets that matter once model-market fit is unlocked.

Domain expertise that captures edge cases, data pipelines that improve output quality over time, relationships that create trust before scale, and workflow integrations that make adoption easier when the product is ready. These are the pieces that turn timing into advantage.

For investors: what to look for beneath the demo

From an investor’s perspective, this framework explains a familiar pattern. The product demos well, early users engage, and the narrative is strong. The business, however, does not convert that into durable growth.

Investors need to ask three key questions.

The first question is where the model stands.

Does the model actually perform on the specific job it was built to do?

The second is about human involvement.

Is human review narrowing the gap to usability, or compensating for a system that cannot yet deliver?

The third sits at the business layer.

Does the company’s model align with how this market distributes budget and expands spend?

Over time, a pattern emerges. The companies that win after model-market fit are not always the earliest entrants. They are the ones that built depth, trust, and infrastructure while others chased surface traction.

Being early and being ready rarely happen together. The best teams learn how to make them meet.

6. The Full Stack

The stack is simpler than it looks, and less forgiving than most founders assume. The takeaway is this:

Technical model fit determines if the product works at all.

Business model fit determines if the market will carry it forward.

Only after those layers are solid does the rest of the company begin to compound. Product-market fit is really just the result of getting these foundations right.

The most dangerous place to be is right in the middle. These are the companies where the tech is good, but the business model is at war with how the industry actually works.

From the outside, it looks like a failure of effort or a bad sales team. On the inside, the signals are just messy and hard to read. The problem is not talent but alignment.

Next time you see a strong product with strange numbers or confusing costs, do not blame the team first. Look at the stack instead. Ask which layer is missing. It might be that the floor was never there to begin with.