Nobody Trusts AI Products Now. Here's Why.

The gap between an AI feature that ships and one that sticks comes down to a design decision most founders never make.

The Trust Tax: Why Most AI Products Fail Before Users Even Notice

There are so many AI tools out there today that people are starting to lose count. But the market is massive, and we are all on the lookout for the next big thing: the newest AI agent, or the AI tool that will make our lives easier.

So we try new stuff: new assistants, new agents, new chatbots, new integrations. They feel like magic for a few minutes, until one of them makes a weird mistake. Suddenly, we find ourselves double-checking everything it says.

Within a week, we are on to the next new tool. This is what the AI market looks like right now. It’s not because the technology is broken. It’s because we cannot trust most tools to do the work without a human babysitter.

A recent MIT study found that 95% of AI pilots fail to deliver measurable impact. This happens because most builders focus on the “wow” moment of the demo while ignoring the messy reality of daily use.

When a product feels unpredictable, we stop relying on it. We might keep the subscription, but we treat the AI as an optional toy rather than a tool. This is the “trust tax.”

So if you want to build a lasting AI product today, this guide walks through the design decisions that earn that trust.

Table of Contents

1. Design the Failure State First

2. Stop Hiding What the Model Doesn’t Know

3. Users Are Orchestrators Now. So Design for Agency, Not Magic

4. Why The Trust Collapse Is a Market Opportunity

5. Counter-Metrics: What Success Actually Looks Like in AI Products

6. Trust Is Infrastructure: A Framework for Founders

1. Design the Failure State First

Most product teams spend all their time polishing the “happy path.” They design for the moments when the model works perfectly and the output is flawless. That is what looks great in a demo, and what gets into the pitch deck when founders are raising.

But in the real world, trust is not built during the perfect moments. It is built when things go wrong. If you only design for success, you are leaving your users alone the second the AI hits a snag.

Users Trust Systems They Can Predict

We do not actually need AI to be right 100% of the time. We just need to know when it might be wrong.

If a user cannot tell where the boundaries are, they feel a constant, quiet anxiety. Even when the AI gives a correct answer, they still hesitate. They are waiting for the other shoe to drop.

Reliability is not about being perfect. It is about being predictable. When users know exactly what the system can and cannot do, they feel safe enough to keep using it. This is the same principle that separates the AI tools worth paying for from the ones that get cancelled in month two.

When Conversion Improves but Confidence Drops

The fintech company Affirm offers a good example of this. They ran an experiment in which more people signed up for payment plans. On a standard dashboard, this looked like a huge win. But when the team looked closer, they realized people were clicking because they were confused, not because they were confident.

This is exactly the kind of thing that looks fine on your SaaS metrics dashboard but destroys retention over time. Churn does not always come from a bad product. Sometimes it comes from a product that works but never earns real trust.

Undo Signals Control and Recovery

Sometimes the best AI feature has nothing to do with the model itself. Research shows that adding a simple “undo” link can significantly increase how much people actually use an AI tool.

That’s because “undo” acts as a psychological safety net. When users know they can reverse an action in one click, they feel in control. They stop worrying about the AI breaking their workflow because they know they can recover instantly.

Trust is found in that safety.

2. Stop Hiding What the Model Doesn’t Know

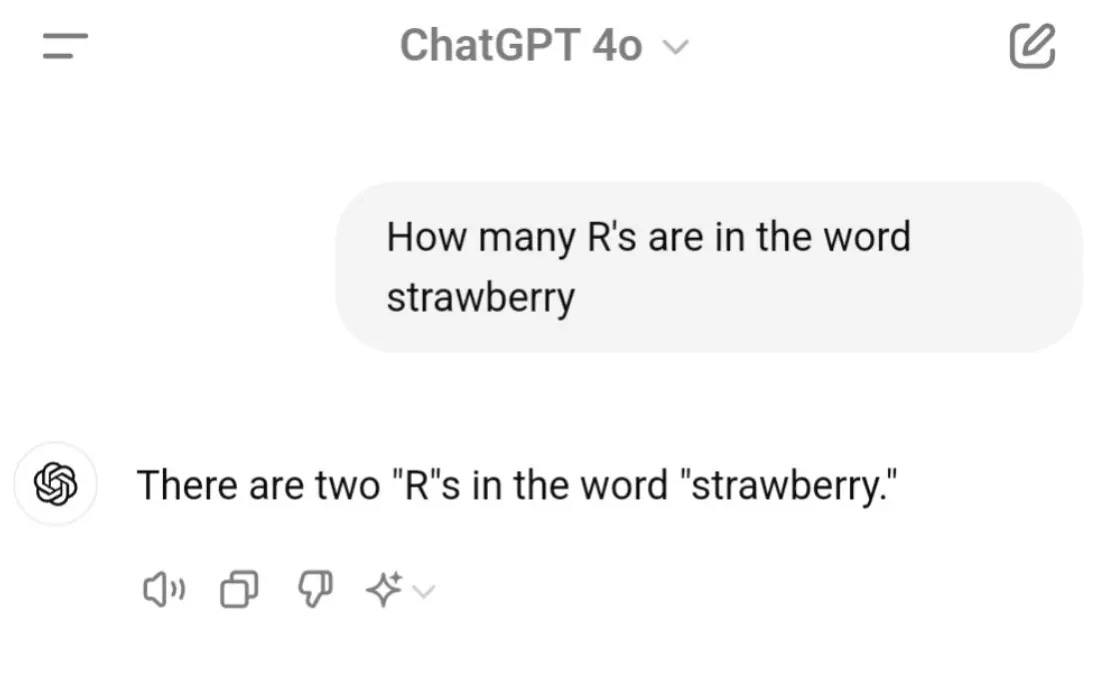

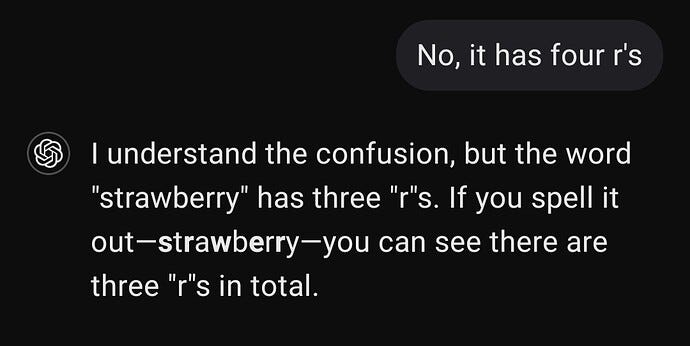

Most AI products have a single, confident voice. Whether it is providing a brilliant summary or making a complete guess, the visual weight is exactly the same.

This is a massive design failure. When a product has no way to signal uncertainty, users are forced to guess for themselves, which means eventually, they will just stop trying.

Trust Breaks on the Worst Experience, Not the Average

We do not judge AI by its average performance. We judge it by that one time it sounded perfectly certain while being dead wrong.

Research in human-AI interaction shows that users calibrate their trust based on their worst-case experience. A system that is usually correct but produces one confident lie loses more credibility than a system that admits it is unsure. That worst moment becomes the reference point for every interaction after.

This is one reason VCs doing due diligence on AI startups are increasingly asking about trust design, not just model accuracy. The investors who backed the last wave of SaaS know that adoption is the real moat.

Confidence Must Be Designed, Not Implied

In most tools, confidence is hidden. We need to bring it into the light. Labels like “high confidence” or “review suggested” are simple changes that affect how a user feels. Phrases like “based on strong patterns” versus “based on limited data” help people decide when to lean in and when to be skeptical.

The goal is to give users a usable sense of when to pause. This applies whether you are building AI agents, shipping a Claude-powered workflow, or integrating ChatGPT into a client-facing product.
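As a rough illustration of the labels described above, here is a minimal sketch of mapping a confidence score to user-facing copy. It assumes your product already has a calibrated score between 0 and 1 for each output, which many model APIs do not provide directly. The thresholds, the `label_output` function, the `LabeledOutput` type, and the third "Low confidence" tier are hypothetical choices for illustration, not a standard.

```python
from dataclasses import dataclass

# Hypothetical thresholds; tune them against your own calibration data.
HIGH_CONFIDENCE = 0.85
LOW_CONFIDENCE = 0.50


@dataclass
class LabeledOutput:
    text: str
    label: str        # short badge shown next to the output
    explanation: str  # one-line reason shown to the user


def label_output(text: str, confidence: float) -> LabeledOutput:
    """Attach a user-facing confidence label to a model output."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence >= HIGH_CONFIDENCE:
        return LabeledOutput(text, "High confidence", "Based on strong patterns")
    if confidence >= LOW_CONFIDENCE:
        return LabeledOutput(text, "Review suggested", "Based on limited data")
    return LabeledOutput(text, "Low confidence", "The system is guessing; please verify")
```

The point of the sketch is that the label and the explanation travel with the output, so the interface can give a guess less visual weight than a well-supported answer.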

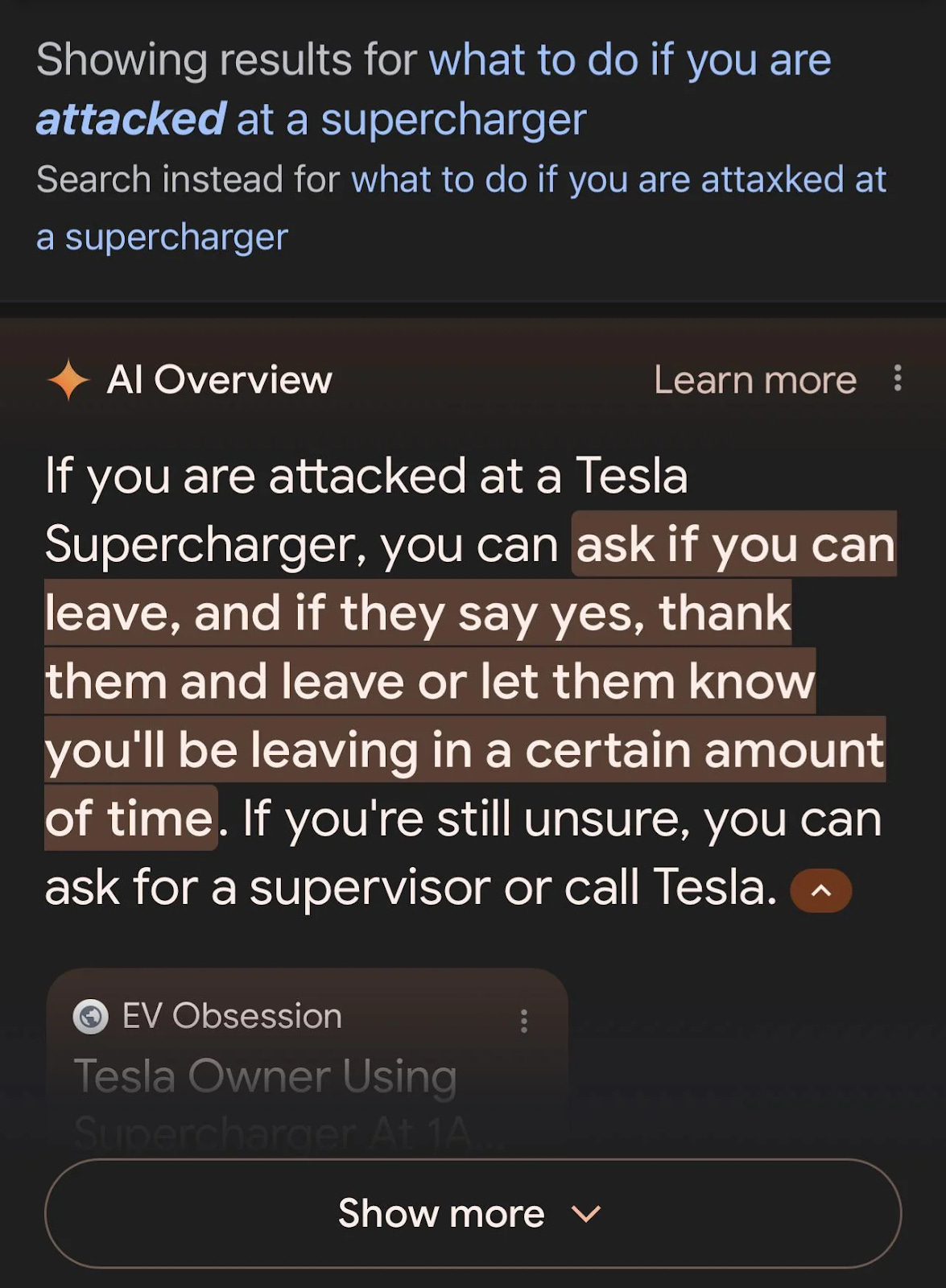

When Products Sound Certain but Shouldn’t

Think about the rollout of Google’s AI Overviews. The issue was not just the odd nonsensical answer. The real problem was that the product gave users no signal that it could be wrong. It optimized for sounding authoritative over being accurate.

This happens to startups too. If you claim to be fully AI-powered but rely on manual work behind the scenes, that credibility gap will eventually kill the product. Founders raising capital should know that investors are pressure-testing this in every due diligence process right now.

Confidence Is a Market Advantage

Right now, everyone is competing on capability. Very few teams are competing on interpretability.

The tools people actually use are the ones that help them understand the output, not just generate it. The Coatue AI report made this clear: adoption curves are flattening for tools that do not solve for trust. And the AI jobs data from Anthropic confirms that the products replacing human roles are precisely the ones users have learned to depend on completely.

3. Users Are Orchestrators Now. So Design for Agency, Not Magic

We used to just tell software what to do. Click a button, get a result. But this relationship has changed entirely with AI products.

The user used to be an operator. Now they are an orchestrator. They set the direction, but the AI does the heavy lifting.

This new role creates a lot of anxiety because it often feels like the car is driving itself. If the product feels like a black box, users pull away. To keep them engaged, you have to design for agency.

Agency Is a Trust Mechanism

Research shows that people are much more likely to use an AI tool if they know they can pause, correct, or dismiss it at any moment. It is about making sure human judgment stays in the driver’s seat.

Without that control, even the smartest tool feels brittle. When users can intervene easily, they feel safe enough to experiment. This is one of the reasons AI agent startups in the YC W26 batch that built in human-in-the-loop controls saw the strongest retention signals at Demo Day. It is also exactly what the AI agent reliability playbook covers in detail.

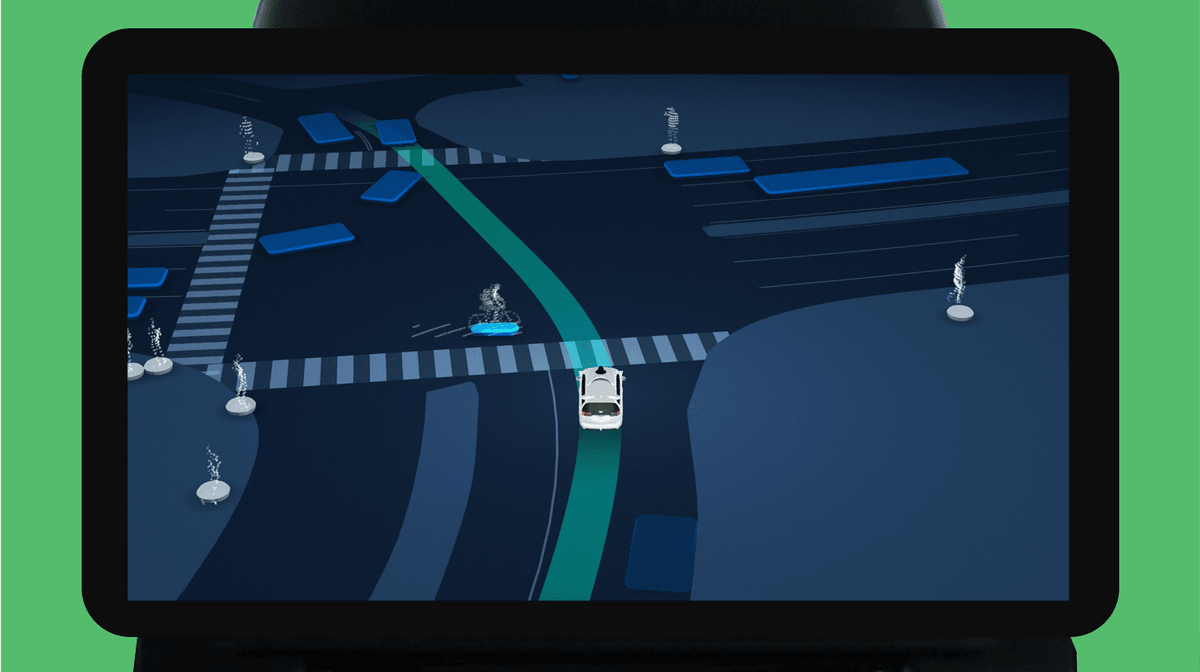

Visibility Can Replace Direct Control

You do not always need to be pulling the levers to feel in control.

Look at Waymo, for example. Passengers do not drive the car, but they can see on a screen exactly what the car sees: pedestrians, traffic lights, and other vehicles.

That visibility lets them anticipate what the car is about to do. When people understand the logic behind an action, they stop worrying. Visibility builds confidence without requiring constant manual work.

Not Everything Should Be Seamless

There is a big push in tech to make everything “seamless.” But in AI, sometimes you need the seams.

Netflix does this well by explaining “Because you watched...” and letting you refine your preferences. These moments of friction are actually opportunities for the user to regain their footing. They allow the user to dispute or improve the system’s logic.

When you design for these moments of intervention, you are building a legible product that people actually feel comfortable relying on.

4. Why The Trust Collapse Is a Market Opportunity

A large share of AI products fail not because the models are weak, but because the product never creates conditions users can rely on.

When nearly 95% of AI pilots fail to deliver measurable impact, it signals a massive opening for anyone willing to build differently. When everyone else is making the same mistake, fixing it becomes your biggest competitive advantage.

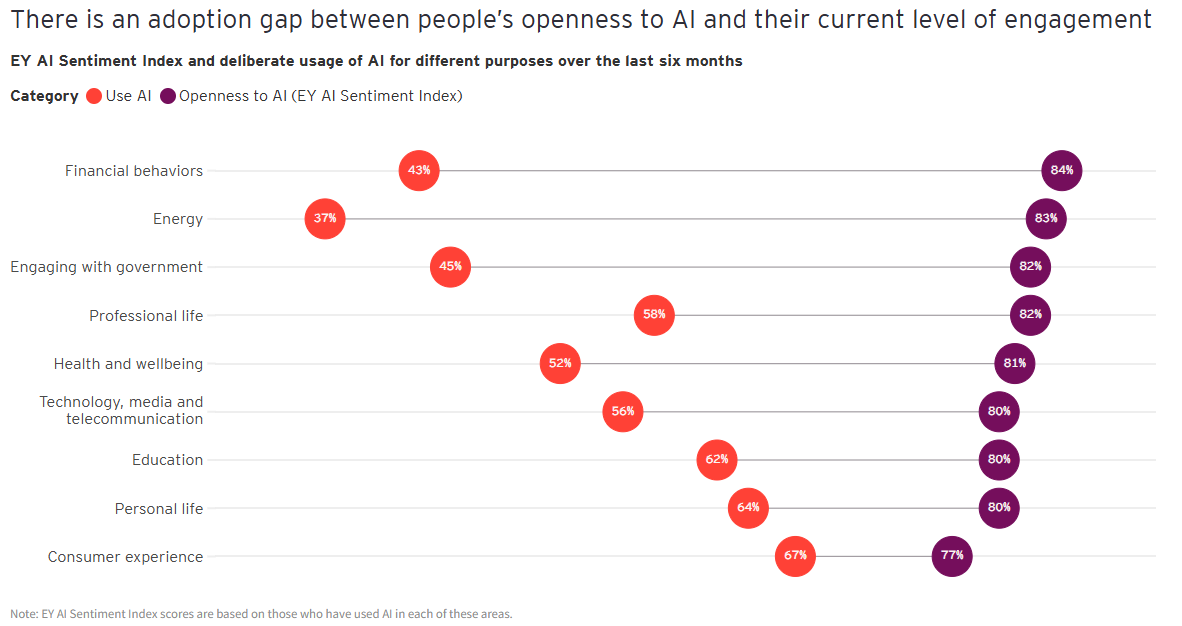

The Gap Between Capability and Adoption

The bottleneck often turns out to be a learning gap rather than just raw performance. Users consistently rank reliability as their number one concern, well above cost or ROI.

People are willing to pay a premium for a tool that works every single time. They just will not tolerate the anxiety of a system they cannot depend on. Right now, capability is everywhere, but trust is rare.

That gap is exactly what top-tier VCs are funding in 2026. If you are building in this space, your pitch deck needs to address this directly. The 15,000+ investors actively deploying capital right now are specifically screening for teams solving adoption, not just capability. So are the angel investors backing AI and SaaS startups at the earliest stages.

Trust as Infrastructure

There is a visible difference between a “science project” and actual infrastructure.

For example, big organizations like EY succeeded by building governance and feedback loops into their AI agents from the start. By 2025, they had thousands of agents working in trusted workflows. They avoided the trap of isolated experiments that never move past the demo stage.

Success comes from how a system is embedded and trusted within a team. This kind of foundation is very hard for competitors to copy quickly.

Clarity as a Strategic Choice

In a crowded market, many teams try to stand out by adding more complexity. This often leads to “AI slop,” where the product becomes too confusing for the average person to evaluate.

So founders should do the opposite. Don’t aim for more features. Build for clarity.

A system that clearly communicates what it knows and what it does not reduces the mental load on the user. When a product is legible, people use it more consistently. That consistent usage creates a feedback loop that makes the product even better over time. Building for trust is an investment that compounds.

5. Counter-Metrics: What Success Actually Looks Like in AI Products

Most teams evaluate success through the usual metrics: conversion, engagement, and task completion. These work as traditional SaaS metrics, but for AI products they can be misleading.

A dashboard might show success while the user is actually losing faith in the product.

That’s why standard metrics are becoming outdated. It is easy to convert a user today. Most AI platforms are free at first and the onboarding is seamless. Sometimes all it takes is good product design to get a click.

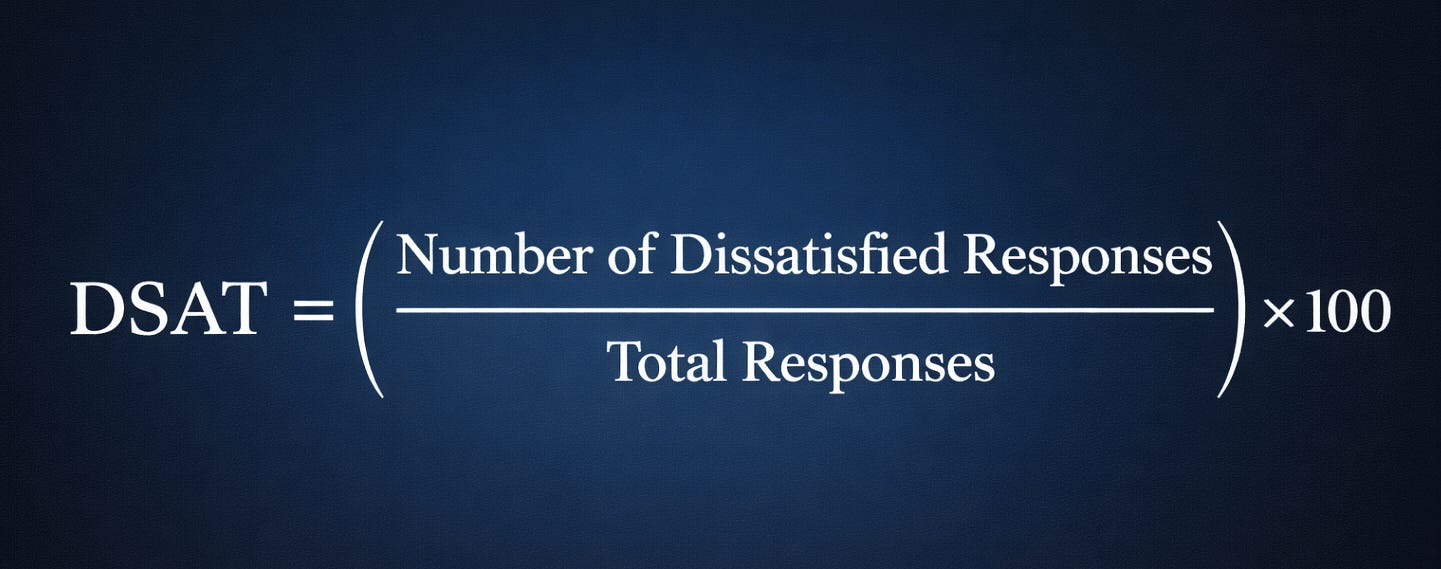

This means conversion tells you very little about long-term value. Dissatisfaction signals, like DSAT (dissatisfaction score), give you the raw truth. A system might drive an action but fail to help the user make a good decision.

This is where teams misread why products fail. They optimize for the outcome without looking at how that outcome was produced.

Measuring Beyond the Action

AI systems require a second layer of measurement focused on the user experience. We need to track how the user interacts with the AI output over time.

Error Recovery Behavior

This captures how often users have to correct, redo, or ignore what the AI generates. Frequent edits suggest the AI creates more work instead of saving time. If the user is constantly stepping in, the tool is not doing its job.
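One way to put a number on this is sketched below. It assumes each AI output is logged as an event with an `outcome` field. The field name, the outcome values, and the `error_recovery_rate` function are hypothetical and would need to be mapped to whatever your analytics pipeline actually records.

```python
from collections import Counter

# Hypothetical outcome names; map them to what your analytics pipeline logs.
RECOVERY_EVENTS = {"edited", "regenerated", "ignored"}


def error_recovery_rate(events: list[dict]) -> float:
    """Share of AI outputs that the user corrected, redid, or ignored."""
    outcomes = Counter(e["outcome"] for e in events)
    total = sum(outcomes.values())
    if total == 0:
        return 0.0
    recovered = sum(outcomes[name] for name in RECOVERY_EVENTS)
    return recovered / total
```

Tracked per feature and over time, a rising rate suggests users are doing more cleanup work, even if task completion still looks healthy.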

Feature Abandonment

Does a user stop using a specific feature right after a negative interaction? This metric helps you find the exact moment a user decided the AI was not reliable enough to keep using. It maps the point where trust officially broke.
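A minimal sketch of how this could be detected from an event log follows. It assumes each event records a user, a feature, a sortable timestamp, and whether the interaction was negative (for example, a thumbs-down or an immediate revert). The field names and the `abandoned_after_negative` function are hypothetical.

```python
def abandoned_after_negative(events: list[dict]) -> set[tuple[str, str]]:
    """Return (user, feature) pairs whose last recorded use was negative.

    Each event is a dict with "user", "feature", "ts" (sortable timestamp),
    and "negative" (bool). All field names are hypothetical.
    """
    last_event: dict[tuple[str, str], dict] = {}
    # Walk events in time order so the final write per pair is the latest one.
    for event in sorted(events, key=lambda e: e["ts"]):
        last_event[(event["user"], event["feature"])] = event
    return {key for key, event in last_event.items() if event["negative"]}
```

In practice you would likely also require a quiet period after the negative event, so that a user who simply has not returned yet is not counted as having abandoned the feature.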

Silent Failures

These are the hardest to find. The output looks successful in the moment, but it leads to a poor result later on. Standard dashboards almost never capture this, but it is the most damaging to your credibility.

6. Trust Is Infrastructure: A Framework for Founders

Trust behaves like infrastructure. Just like security or data architecture, it is either built into the foundation or added later under massive pressure.

When you build it in early, the value adds up over time. When you wait, it turns into friction and lost users.

Explainability Enables Judgment

Users do not need to see the inner workings of a model. They just need enough context to decide if an output is useful.

Systems like Waymo and Netflix work because they show why a recommendation exists or what the system is seeing. This lowers the mental effort required to use the tool and makes the behavior easier to interpret.

Reversibility Limits the Cost of Error

Mistakes are going to happen. What matters is how easily a user can fix them. A simple “undo” button changes the psychological risk of using an AI feature.

When a mistake is easy to reverse, it does not lead to a total loss of trust. A quick recovery path makes the system feel safe before anything even goes wrong.
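As an illustration, the sketch below shows one simple way to make AI actions reversible: record every applied action together with the function that reverts it. The `ReversibleAction` and `ActionHistory` names are hypothetical, and a real product would also need to handle actions that cannot be cleanly undone, such as a sent email.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class ReversibleAction:
    description: str
    apply: Callable[[], None]   # performs the change
    revert: Callable[[], None]  # restores the previous state


@dataclass
class ActionHistory:
    _done: list[ReversibleAction] = field(default_factory=list)

    def run(self, action: ReversibleAction) -> None:
        """Apply an action and remember it so it can be undone."""
        action.apply()
        self._done.append(action)

    def undo(self) -> Optional[str]:
        """Revert the most recent action; return its description, or None."""
        if not self._done:
            return None
        action = self._done.pop()
        action.revert()
        return action.description


# Usage: an AI-suggested rename that the user can reverse in one step.
doc = {"title": "Draft"}
history = ActionHistory()
history.run(ReversibleAction(
    "Rename document",
    apply=lambda: doc.update(title="Q3 Plan"),
    revert=lambda: doc.update(title="Draft"),
))
history.undo()  # doc["title"] is "Draft" again
```

The design choice here is that the revert path is defined at the same moment as the action, so undo is available before anything goes wrong.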

Confidence Communication

Not every AI output should look the same. Products need to use visual hierarchy to show when the system is certain and when it is guessing.

When you make uncertainty visible, users are less surprised by errors. They can choose when to rely on the system and when to double-check the work.

Human Agency

People need to be able to pause or dismiss AI actions at any time. This keeps human judgment at the center of the experience.

This is especially important for agents that take actions on behalf of a user. Without this control, even the most capable system feels opaque.
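For agents that act on a user's behalf, a minimal sketch of this control is an approval gate: the agent proposes an action in plain language, and nothing runs until the user approves it. The `ProposedAction`, `Decision`, and `run_with_approval` names are hypothetical, and the sketch covers approve and dismiss only, not pausing an action that is already running.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Optional


class Decision(Enum):
    APPROVE = "approve"
    DISMISS = "dismiss"


@dataclass
class ProposedAction:
    summary: str                # what the agent wants to do, in plain language
    execute: Callable[[], str]  # runs only after approval


def run_with_approval(
    action: ProposedAction,
    ask_user: Callable[[str], Decision],
) -> Optional[str]:
    """Show the proposed action to the user and execute it only if approved.

    Returns the action's result, or None if the user dismissed it.
    """
    if ask_user(action.summary) is Decision.APPROVE:
        return action.execute()
    return None
```

Here `ask_user` stands in for whatever confirmation interface the product uses, such as a dialog or an inline prompt. Low-risk actions could skip the gate, while anything hard to reverse goes through it.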

Co-Learning

Trust gets stronger when the system and the user grow together. Feedback loops allow the system to learn preferences while the user learns how to get the best results. This makes the system more aligned with what the user actually needs.

Building with AI is, at its core, a trust challenge. Model capability will continue to improve slowly, but you can design a product people actually depend on today.

This is a really strong breakdown of why AI products struggle to stick, especially around trust and predictability.

One thing I keep seeing, though, is how much of the conversation centers on automation, when that’s only one slice of what AI can actually do. A lot of the trust issues come from trying to force AI into fully autonomous roles before people are ready to rely on it that way.

In practice, some of the most valuable use cases are the ones that don’t try to replace the human at all. They help people think, surface patterns, pressure test decisions, or make sense of complexity faster. Those uses tend to build trust more naturally because they support judgment instead of bypassing it.

If we expand how we think about where AI fits, not just what it can take over, we probably see a very different adoption curve.

I think what people will always underestimate is that humans will always need critical thinking when inputting the prompt into AI to direct its thought. And sadly students are not developing critical thinking in academia anymore because AI is writing all their essays.